Cablese

Maximum signal. Minimum context. That's context engineering, whether you were sending a telegram or now prompting an AI agent.

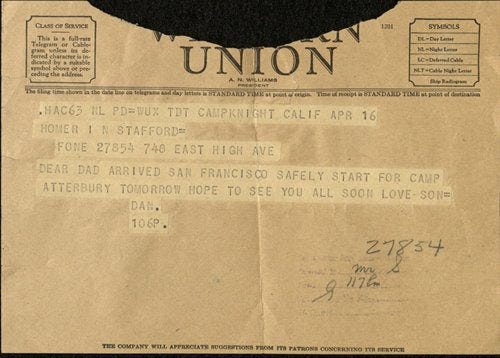

Cablese (coined ~1916) pronounced KAY-buh-leez, specifically described the compressed language used by journalists and diplomats sending dispatches over submarine cables. It was a discipline, not just shorthand.

Four words. An entire story. Loss, hope, grief, all in a sentence fragment that breaks every grammar rule.

Hemingway learned cablese as a young journalist. It shaped his entire literary style. Short sentences. No wasted words. The same constraint that made telegrams expensive made his prose legendary. Hemingway (allegedly) proved that meaning doesn’t live in syntax. It lives in the words you choose and the context your reader already carries.

“Late. Traffic. Sorry.” “Ship it.” “SOS.”

You understood all of those instantly. No subject-verb agreement. No articles. No auxiliary verbs. Your brain filled in the rest.

Linguists call this Economy of Language. AI teams call this Context Engineering. Content words carry the meaning. Grammar is scaffolding your brain doesn’t need when context is established.

This isn’t new. We solved this problem 150 years ago.

Cablese (1870s-1960s) → Telegraphic Style (SMS era) → Context Engineering (AI era)

Same discipline. Same constraint. Different medium.

The Telegraph Rewired Language

Telegrams were billed per word. Sending a message wasn’t like writing a letter. It was like buying real estate. Every word cost money.

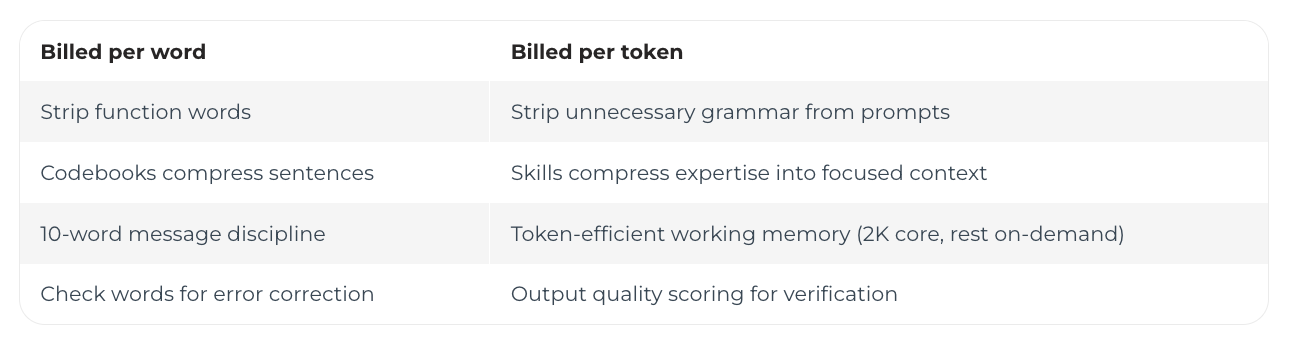

People didn’t just shorten sentences. They reinvented language through four optimization layers.

Layer 1: Syntactic Stripping. Articles, pronouns, auxiliary verbs were the first casualties. “I am arriving at the station at six o’clock” became “Arriving station six.” 60% cost reduction. Zero information lost.

Layer 2: Commercial Codebooks. Businesses published dictionaries where one code word replaced entire sentences. In the ABC Universal Commercial Telegraphic Code, “ADOPTER” meant “The goods have been shipped and are expected to arrive on the 15th.” One word replaced fifteen. 93% compression. The 19th-century .zip file.

Layer 3: The Ten-Word Strategy. Western Union charged a base rate for 10 words, with steep jumps after. This created a cultural unit of thought. People became masters of fitting an entire message into exactly 10 words.

Prioritize the lead. Kill the fluff.

Layer 4: Error Correction. Aggressive compression meant one typo could change everything. Operators added “check words” (the sum of transmitted numbers) as verification. A human checksum.

Now Replace “Word” With “Token”

AI agents are billed per token. The economics rhyme.

But token cost is the surface concern. The deeper issue is what earns a seat in working memory.

Working Memory: The Real Constraint

George Miller’s 1956 research found humans hold roughly 7 items in working memory at any time. Everything else lives in long-term memory, available but not active.

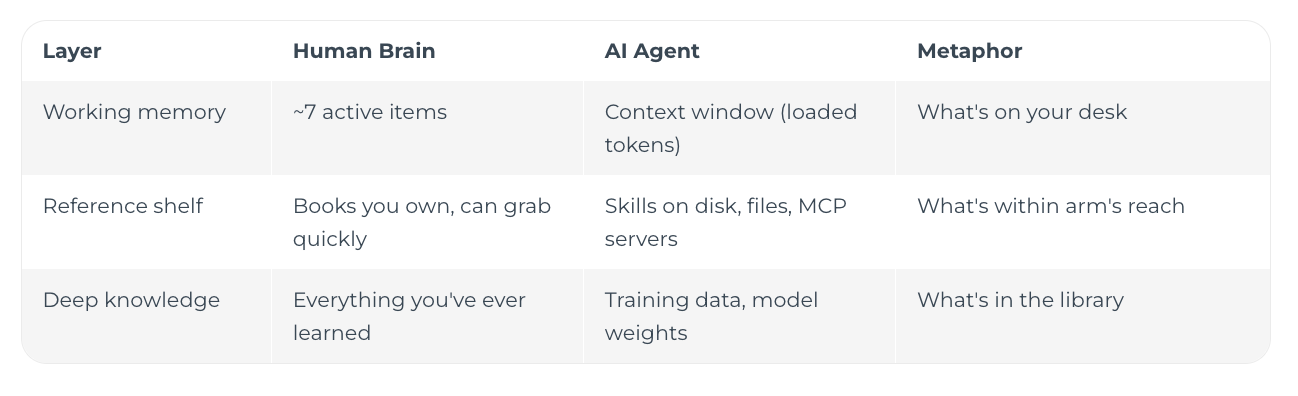

AI has the same architecture with different labels:

Your brain doesn’t try to hold every fact you’ve ever learned in working memory while solving a math problem. It loads what’s relevant, keeps the rest accessible, and lets deep knowledge fill gaps automatically.

AI should work the same way.

A CLAUDE.md at 11,000 tokens is like trying to hold 47 things in your head while doing arithmetic. Your brain can’t. Neither can the context window. Not because it runs out of space, but because priority degrades. The 11,000th token gets less attention than the 1st.

The fix: treat context like working memory.

Core rules always loaded (2K tokens). Your desk. The few things you need for every task.

Skills loaded on-demand (0-10K depending on task). Your reference shelf. Grab the security book when reviewing auth. Grab the migration guide when changing schema. Put it back when you’re done.

Deep knowledge is always there. Claude’s training data handles the rest, the same way your long-term memory fills in “what is a for-loop” without you consciously loading it.

Economy of language applied to AI context: not what can you fit, but what deserves a seat in working memory.

The Science: Why Fragments Work

Lexical Semantics. Content words carry the vast majority of information. “Fire! Door! Run!” communicates the same survival instruction as the full sentence with articles and prepositions.

Predictive Processing. Your brain is a prediction engine. A waiter says “Check?” and your restaurant schema fills in the full meaning automatically. One word, complete communication.

The N400 Response. Psycholinguists measure a brain wave that spikes 400 milliseconds after processing a semantically unexpected word. Your brain is constantly predicting what comes next from incomplete input. Partial data isn’t the exception. It’s the default operating mode.

AI agents arrive at the same functional result through a different mechanism. Humans use predictive processing and schemas. LLMs use attention over context windows. Both extract meaning from fragments when context is established.

The Parallel

“fix auth bug, add tests, push” is a telegram. Nine words. Claude understands it the way a telegraph operator understood “Arriving station six.”

“Merge. Deploy. Monitor.” Three words. An entire deployment pipeline.

How This Connects

Economy of Language + AI Lingo = Context Engineering.

AI Lingo gives you the right keywords (”rigorous,” “first-principles,” “steelman”). Economy of Language strips the grammar around them. Together: maximum signal, minimum working memory consumed.

One caveat: brevity works when context is established. “fix auth” is ambiguous in isolation. With a CLAUDE.md providing project context, it’s perfectly clear. The telegrams worked because sender and receiver shared context. Same principle.

The Bottom Line

The telegraph operators figured out something we’re relearning: when every word costs money, you get very good at knowing which words matter.

But the deeper lesson isn’t about cost. It’s about attention.

What you load into working memory, whether it’s your brain or a context window, determines the quality of what comes out.

Choose carefully what earns a seat at the desk. Let the reference shelf and deep knowledge handle the rest.

Stop writing essays to your AI. Start treating every token like a telegram word.